Laravel Packages: Creating My First - Part 2

The internals of Housekeeping: the packages that power it, the testing challenges I hit, and the architectural decisions that shaped the build.

So, part one was the "why" and the "what" - the itch, the scope, the tooling choices. This post is the "how", and honestly the more interesting half: the packages doing the heavy lifting under the hood, the problems I hit during development (some self-inflicted), and the architectural decisions that shaped the build.

The GitHub Client extraction

Early on I made the decision to wrap all GitHub API interactions in a dedicated Client class rather than calling GrahamCampbell\GitHub directly from the commands. This wasn't strictly necessary for a package this size, but it was a deliberate architectural choice that paid off quickly.

The graham-campbell/github package uses magic methods and dynamic API resolution under the hood. It works well in practice, but PHPStan has absolutely no idea what's going on - every call chain is effectively untyped. By isolating all of this behind my own Client class, I contained the @phpstan-ignore-next-line annotations to a single file rather than scattering them across every command. The commands themselves interact with a clean, typed interface, which is far easier to reason about (and to mock, as I'll get to later).

The client is intentionally thin - it doesn't handle errors, doesn't retry, doesn't cache. It resolves the authenticated username, calls the API, and returns the result. Error handling lives in the commands where the context exists to present useful feedback to the user. This separation meant I could test the commands by mocking the Client without needing to understand the internals of the GitHub API library, which turned out to be a significant time saver.

Here's a trimmed example showing the pattern - every method follows the same shape:

```php
use GrahamCampbell\GitHub\Facades\GitHub;

class Client
{
    public function username(): string
    {
        /** @phpstan-ignore-next-line */
        return GitHub::api('currentUser')->show()['login'];
    }

    /** @param array<int, string> $labels */
    public function addLabels(string $repo, int $number, array $labels): void
    {
        // The API accepts several labels in a single request.
        /** @phpstan-ignore-next-line */
        GitHub::api('issues')->labels()->add($this->username(), $repo, $number, $labels);
    }

    /** @param array<int, string> $labels */
    public function removeLabels(string $repo, int $number, array $labels): void
    {
        // Resolve the owner once: username() is itself an API call.
        $owner = $this->username();

        // The API removes a single label per request, so loop over them.
        foreach ($labels as $label) {
            /** @phpstan-ignore-next-line */
            GitHub::api('issues')->labels()->remove($owner, $repo, $number, $label);
        }
    }
}
```

One quirk worth noting: the GitHub API removes labels one at a time but can add them in batch. So removeLabels iterates while addLabels passes an array. Small inconsistencies like this are exactly why the wrapper exists - the commands don't need to know about API-level quirks.
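To see the asymmetry from the caller's side, here's a standalone sketch of what syncing an issue to an exact set of labels would cost in requests. `planLabelSync()` is a hypothetical helper, not part of Housekeeping: additions can go out as one batched call, while every stale label needs its own call.

```php
<?php

/**
 * Hypothetical helper (not part of Housekeeping): work out which calls are
 * needed to move an issue from its current labels to the desired set.
 *
 * @param array<int, string> $current
 * @param array<int, string> $desired
 * @return array{add: array<int, string>, remove: array<int, string>}
 */
function planLabelSync(array $current, array $desired): array
{
    return [
        // Everything missing can be sent in a single batched "add" request.
        'add' => array_values(array_diff($desired, $current)),
        // Each stale label needs its own "remove" request.
        'remove' => array_values(array_diff($current, $desired)),
    ];
}

$plan = planLabelSync(['bug', 'needs-triage'], ['bug', 'good-first-issue', 'help-wanted']);

// One request for all additions, plus one request per label removed.
$requests = (count($plan['add']) > 0 ? 1 : 0) + count($plan['remove']);

echo json_encode($plan), PHP_EOL; // {"add":["good-first-issue","help-wanted"],"remove":["needs-triage"]}
echo "API requests: {$requests}", PHP_EOL; // API requests: 2
```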

laravel/prompts in practice

The interactive terminal UI is built entirely on laravel/prompts, and it's genuinely excellent for this kind of tool. The main housekeeping:list command uses a recursive navigation pattern - mainMenu() calls labelLoop() calls issueList() calls issueDetail(), and "back" simply calls the parent method again. It's not a formal state machine, just PHP's call stack doing the work. Slightly hacky? Perhaps. But for four screens it's perfectly readable and avoids pulling in a state management abstraction that would be overkill.
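Stripped of the prompts and API calls, the pattern looks roughly like this. It's a standalone sketch rather than the package's code: a scripted `$choices` queue stands in for user input, each screen is reduced to "open", "back", or anything else to quit, and `maxDepth` records how many screen calls are stacked up.

```php
<?php

// Illustrative only: a scripted queue of choices replaces laravel/prompts input.
final class RecursiveMenu
{
    public int $maxDepth = 0;

    /** @param array<int, string> $choices */
    public function __construct(private array $choices) {}

    public function mainMenu(int $depth = 1): void
    {
        $this->screen($depth, null, fn (int $d) => $this->labelLoop($d));
    }

    private function labelLoop(int $depth): void
    {
        $this->screen($depth, fn (int $d) => $this->mainMenu($d), fn (int $d) => $this->issueList($d));
    }

    private function issueList(int $depth): void
    {
        $this->screen($depth, fn (int $d) => $this->labelLoop($d), fn (int $d) => $this->issueDetail($d));
    }

    private function issueDetail(int $depth): void
    {
        $this->screen($depth, fn (int $d) => $this->issueList($d), null);
    }

    // "back" calls the parent again, so every navigation adds another call.
    private function screen(int $depth, ?Closure $parent, ?Closure $child): void
    {
        $this->maxDepth = max($this->maxDepth, $depth);
        $choice = array_shift($this->choices) ?? 'quit';

        if ($choice === 'back' && $parent !== null) {
            $parent($depth + 1);
        } elseif ($choice === 'open' && $child !== null) {
            $child($depth + 1);
        }
        // Anything else (e.g. "quit") returns, unwinding the whole stack.
    }
}

$menu = new RecursiveMenu(['open', 'open', 'open', 'back', 'back', 'open', 'quit']);
$menu->mainMenu();
echo "Max screen depth: {$menu->maxDepth}", PHP_EOL; // Max screen depth: 7
```

After that script the user is only three screens in (main menu, labels, issues), but seven screen calls are on the stack, because each "back" called the parent again instead of returning to it.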

For repository selection I used suggest() (typeahead/autocomplete) since a developer might have dozens of repos, but select() (fixed dropdown) for labels and issues where the list is bounded. The distinction is small but it's the difference between a tool that feels considered and one that doesn't. Every API call is wrapped in spin() to show a loading indicator - GitHub's API isn't slow, but without the spinner there's an uncomfortable pause where the user doesn't know if the tool has hung or is just thinking.

One decision I went back and forth on was eager loading. Rather than making an API call per label, the command fetches all open issues in one request and filters client-side. This trades memory for speed - one round-trip instead of many. For the "Friday afternoon" use case where you want to quickly find something to work on, the speed matters more than the memory cost. If someone has thousands of open issues this might need revisiting, but for the intended use case it's the right call.
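The filtering half of that trade-off is plain array work. A minimal sketch, assuming issues arrive as decoded arrays in the shape GitHub's REST API returns them (each with a `labels` list of objects carrying a `name`); `issuesByLabel()` is a hypothetical helper, not the package's code. GitHub's API also counts pull requests as issues, so the sketch skips entries carrying a `pull_request` key.

```php
<?php

/**
 * Hypothetical helper: group already-fetched open issues by label name,
 * so switching labels in the UI never triggers another API request.
 *
 * @param array<int, array<string, mixed>> $issues
 * @return array<string, array<int, array<string, mixed>>>
 */
function issuesByLabel(array $issues): array
{
    $grouped = [];

    foreach ($issues as $issue) {
        // The issues endpoint also returns pull requests; skip them.
        if (isset($issue['pull_request'])) {
            continue;
        }

        // An issue with several labels appears under each of them.
        foreach ($issue['labels'] ?? [] as $label) {
            $grouped[$label['name']][] = $issue;
        }
    }

    ksort($grouped);

    return $grouped;
}

$issues = [
    ['number' => 12, 'title' => 'Fix login redirect', 'labels' => [['name' => 'bug']]],
    ['number' => 15, 'title' => 'Add dark mode', 'labels' => [['name' => 'enhancement'], ['name' => 'good first issue']]],
    ['number' => 18, 'title' => 'Bump deps', 'labels' => [['name' => 'bug']], 'pull_request' => []],
];

foreach (issuesByLabel($issues) as $label => $matches) {
    echo $label, ': ', implode(', ', array_column($matches, 'number')), PHP_EOL;
}
```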

The final readonly decision and its consequences

I declared the main Housekeeping service class as final readonly. This was a deliberate design choice - the class has no mutable state, shouldn't be extended, and the readonly keyword communicates intent clearly. It's registered as a singleton in the container, so one instance is shared by everything that resolves it, which makes immutability important.

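The consequence: Mockery can't mock final classes, because it builds each mock as a generated subclass and PHP won't allow a final class to be extended. The solution is dg/bypass-finals, a dev dependency that registers a stream wrapper for local files and strips the final and readonly keywords from PHP source as it's loaded, so the class is only relaxed inside the test run. BypassFinals::enable() is called in beforeEach() in every test file.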

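```php
final readonly class Housekeeping
{
    public function __construct(
        // Promoted properties are implicitly readonly because the class is.
        // PHP 8.1+ allows `new` in initialisers, so no arguments are required.
        private Client $github = new Client,
    ) {}
}
```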

This is a trade-off I'd make again - the design integrity of final readonly is worth more than avoiding a dev dependency. The alternative would be making the class non-final just for testability, which is letting the test framework dictate the architecture. That feels backwards to me.
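To make concrete what final readonly actually enforces, here's a standalone sketch using a hypothetical `Settings` class (PHP 8.2+) rather than the package's own class.

```php
<?php

// Hypothetical class used only to illustrate the modifiers.
final readonly class Settings
{
    public function __construct(public string $token) {}
}

$settings = new Settings('abc123');
$reflection = new ReflectionClass(Settings::class);

var_dump($reflection->isFinal());    // bool(true)
var_dump($reflection->isReadOnly()); // bool(true)

try {
    // Readonly properties can only be initialised once, from inside the class scope.
    $settings->token = 'changed';
} catch (Error $e) {
    echo get_class($e), ': ', $e->getMessage(), PHP_EOL;
}
```

Declaring `class FakeSettings extends Settings {}` would fail with a fatal error, which is the same wall Mockery hits when bypass-finals isn't enabled.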

Testing: what worked and what didn't

The test suite splits into two layers. Unit tests mock the GitHub facade directly and verify that the Housekeeping class delegates correctly. Feature tests mock the Housekeeping class itself and verify that the Artisan commands produce the right output and handle errors gracefully.

Laravel's container-based mocking ($this->mock(Housekeeping::class, ...)) made feature testing straightforward. I could simulate any GitHub response without hitting the network, and the commands behaved exactly as they would in production because the DI wiring was identical. Pest's functional syntax kept things readable too - each test is a self-contained scenario with its own setup, which means failures are immediately understandable without tracing through shared state.

Where things got messier was the spin() function from laravel/prompts. It outputs ANSI escape sequences for the spinner animation, and when testing with expectsOutputToContain(), these escape sequences are part of the output buffer. I couldn't reliably assert on output that included spinner text because the ANSI codes made string matching unpredictable. The workaround was to assert on the final output after the spinner completes, not during it - functional but not elegant.
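An alternative to timing assertions around the spinner (not what the package does) is to normalise the output before matching by stripping ANSI escape sequences first. A sketch with a hypothetical `stripAnsi()` helper; the pattern targets CSI sequences (ESC, `[`, parameters, final byte), which cover colours and cursor control, but not every escape a terminal library might emit.

```php
<?php

/**
 * Hypothetical helper: remove ANSI CSI escape sequences (colours, cursor
 * movement, hide/show cursor) so plain-text assertions are stable.
 */
function stripAnsi(string $output): string
{
    // ESC [ , then parameter bytes (0x30-0x3F), intermediate bytes (0x20-0x2F),
    // and a single final byte (0x40-0x7E).
    return preg_replace('/\x1B\[[0-?]*[ -\/]*[@-~]/', '', $output) ?? $output;
}

// Simulated spinner output: hide cursor, coloured text, clear line, show cursor.
$raw = "\e[?25l\e[36mFetching issues...\e[39m\e[2K\r\e[32mFound 3 open issues\e[39m\e[?25h";

var_dump(str_contains(stripAnsi($raw), 'Found 3 open issues')); // bool(true)
```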

The other pain point was mocking the GitHub API's nested call chains. The real API uses a fluent pattern (GitHub::api('issues')->comments()->all(...)) which means the mock setup requires creating intermediate mock objects and wiring them together. It works, but the test setup for a single API call can be ten lines of mock configuration. I considered extracting this into test helpers but decided against it - the verbosity makes the test's intent explicit, even if it's not pretty.

PHPStan at level 6 (not max)

I run PHPStan at level 6, scanning src/ only. Level max was impractical because graham-campbell/github wraps knplabs/php-github-api, which uses dynamic method resolution extensively. At higher levels, PHPStan flags every interaction with the GitHub client as potentially unsafe, generating dozens of errors that can only be suppressed, not fixed. Level 6 is the sweet spot - it catches real issues (type mismatches, missing return types, unreachable code) without drowning in noise from third-party libraries I can't control.

The phpstan.neon.dist suppresses one known generic template error related to Laravel's collect() helper, and sets reportUnmatchedIgnoredErrors: false so that if the upstream issue gets fixed, CI doesn't suddenly break. Small thing, but it's the kind of defensive configuration that saves you a confused ten minutes six months later.

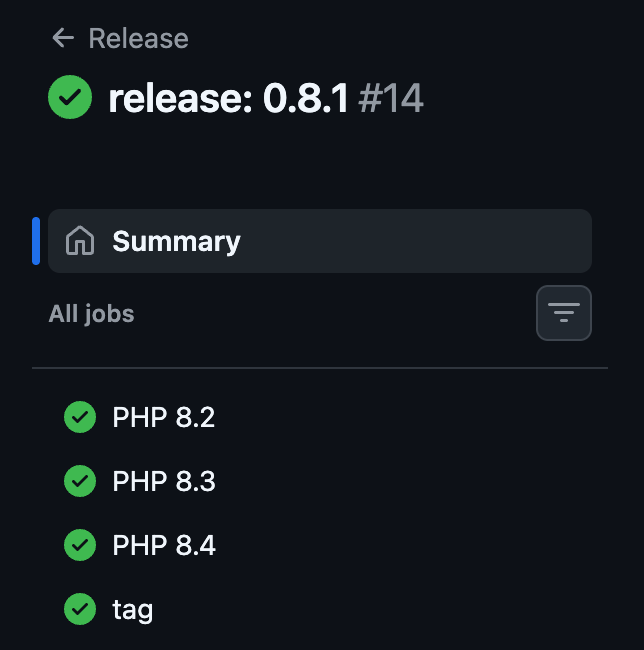

CI and the release workflow

Three GitHub Actions workflows handle quality and releases:

On pull request: Unit tests run across PHP 8.2, 8.3, and 8.4. Fast feedback, no full suite.

On push: Linting (Pint) and static analysis (PHPStan) run. These catch formatting drift and type errors before they accumulate.

On push to main: The full test suite runs across all PHP versions. If the commit message starts with release: X.Y.Z, a second job extracts the version, creates a git tag, and pushes it. Packagist picks up the new tag automatically.

This commit-message-driven release approach means I never manually create tags. The workflow enforces that tests pass before any release is tagged, and the version is explicit in the commit history. It took a couple of iterations to get right - the initial regex for parsing the version from the commit message was too permissive: it wasn't anchored, so it matched partial strings anywhere in longer messages. The fix was anchoring the pattern to the start of the message.
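The workflow itself isn't reproduced here, but the parsing rule is easy to illustrate. Here it is in PHP as a hypothetical `releaseVersion()` helper, alongside an unanchored pattern to show the failure mode: without `^`, a message that merely mentions a release anywhere would trigger a tag.

```php
<?php

/**
 * Hypothetical helper mirroring the workflow's check: return the version if
 * the commit message starts with "release: X.Y.Z", otherwise null.
 */
function releaseVersion(string $message): ?string
{
    // "^" anchors to the start of the whole message (no /m flag, so not per line).
    if (preg_match('/^release: (\d+\.\d+\.\d+)/', $message, $matches) === 1) {
        return $matches[1];
    }

    return null;
}

// Without the anchor, the pattern matches anywhere in the message.
$unanchored = preg_match('/release: (\d+\.\d+\.\d+)/', 'docs: explain the release: 1.4.0 process');

var_dump(releaseVersion('release: 1.4.0'));                           // string(5) "1.4.0"
var_dump(releaseVersion('docs: explain the release: 1.4.0 process')); // NULL
var_dump($unanchored);                                                // int(1)
```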

What I'd do differently

The exec() calls for git operations. I used raw exec() with escapeshellarg() for git commands (checkout, pull, branch creation). It works, but Symfony's Process component would give me better error handling, timeout control, and testability. I avoided it initially to keep dependencies minimal, but the package already pulls in Symfony components via Laravel anyway - the dependency cost would have been zero. Lesson learned.

Organisation repository support. The client resolves the authenticated user's login and uses it as the repository owner for all API calls. This means the package only works with personal repositories, which is a limitation I should have addressed from the start. Supporting organisation repos would require accepting an owner parameter or detecting it from the repository's full name. It's on the roadmap, but it's the kind of thing that's much easier to design in from day one than to retrofit.
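Detecting the owner from the full name could be as simple as the following sketch. `resolveRepository()` is hypothetical, not part of the package: it splits `owner/repo` when both halves are present and otherwise falls back to the authenticated username, preserving the current behaviour for personal repositories.

```php
<?php

/**
 * Hypothetical helper: accept either "repo" or "owner/repo".
 *
 * @return array{0: string, 1: string} [owner, repository]
 */
function resolveRepository(string $input, string $authenticatedUser): array
{
    $input = trim($input);
    $parts = explode('/', $input, 2);

    if (count($parts) === 2 && $parts[0] !== '' && $parts[1] !== '') {
        return [$parts[0], $parts[1]];
    }

    // No owner given: keep today's behaviour and assume a personal repository.
    return [$authenticatedUser, trim($input, '/')];
}

[$owner, $repo] = resolveRepository('acme-corp/billing-service', 'jdoe');
echo "{$owner}/{$repo}", PHP_EOL; // acme-corp/billing-service

[$owner, $repo] = resolveRepository('dotfiles', 'jdoe');
echo "{$owner}/{$repo}", PHP_EOL; // jdoe/dotfiles
```

The Client methods would then take the owner as a parameter instead of calling `$this->username()` internally.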

The recursive navigation pattern. It works for a small number of screens, but every "back" adds a new call to the stack rather than returning to an existing one, so if the command grew more complex the call stack depth could become an issue. A proper state machine (even a simple enum-based one) would scale better. For four or five screens it's fine, but I wouldn't extend it further without refactoring first.
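For comparison, a minimal enum-based version might look like this. It's a standalone sketch, not the package's code: there's no laravel/prompts, a scripted list of choices stands in for user input, and `Screen` and `navigate()` are hypothetical names. Navigation becomes a loop over the current state, so the call stack stays flat however often the user goes back and forth.

```php
<?php

// Hypothetical screens for the list command.
enum Screen
{
    case MainMenu;
    case Labels;
    case Issues;
    case IssueDetail;
    case Exit;

    public function parent(): self
    {
        return match ($this) {
            self::MainMenu, self::Exit => self::Exit,
            self::Labels => self::MainMenu,
            self::Issues => self::Labels,
            self::IssueDetail => self::Issues,
        };
    }

    public function child(): self
    {
        return match ($this) {
            self::MainMenu => self::Labels,
            self::Labels => self::Issues,
            self::Issues, self::IssueDetail => self::IssueDetail,
            self::Exit => self::Exit,
        };
    }
}

/**
 * Drive the state machine with scripted choices; a real command would render
 * the current screen with laravel/prompts on each iteration instead.
 *
 * @param array<int, string> $choices
 * @return array<int, string> names of the screens visited, in order
 */
function navigate(array $choices): array
{
    $screen = Screen::MainMenu;
    $visited = [];

    while ($screen !== Screen::Exit) {
        $visited[] = $screen->name;
        $screen = match (array_shift($choices) ?? 'quit') {
            'back' => $screen->parent(),
            'open' => $screen->child(),
            default => Screen::Exit,
        };
    }

    return $visited;
}

echo implode(' -> ', navigate(['open', 'open', 'open', 'back', 'back', 'open', 'quit'])), PHP_EOL;
// MainMenu -> Labels -> Issues -> IssueDetail -> Issues -> Labels -> Issues
```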

The package is still actively developed - there's a roadmap with items like staleness detection (comparing open issues against recent commits to flag ones that may already be resolved) and an AI integration command that generates structured briefs for agentic workflows. Whether all of these make it in remains to be seen, but the foundation is solid enough to build on without major refactoring.

Building this package deepened my understanding of the Laravel ecosystem in ways that simply using it as a consumer doesn't. The service provider pattern, config publishing conventions, the testing infrastructure via Orchestra Testbench - I'd worked with all of these before, but creating a package forces you to engage with them at a level that application development rarely demands. There's no scaffolding to fall back on, so you have to understand the mechanics properly.

If you're a Laravel developer who's never built a package, I'd encourage it. Find a problem in your workflow, scope it tightly, and build it. The learning compounds quickly.

If you're building internal tooling for your engineering team, or you've got a workflow problem that needs a considered solution rather than a quick hack, get in touch. I've spent close to two decades building and leading engineering teams, including as CTO through a successful acquisition.